As someone who’s long been a student of the history of technology, I grew up excitedly reading accounts of the invention of the Apple II, the Macintosh, and the formation of NeXT Computer—reading about those revolutions while they were still new enough to feel alive. I used to wonder what it would have been like to be there, up close, in the middle of something that changed everything.

Then, in the most unexpected way, I found myself in exactly that position.

I’d followed the evolution of OpenAI closely, tracing it with the kind of attention you only maintain when something overlaps with a lifelong obsession. As a kid, I was fascinated by robotics and artificial intelligence. Later, I kept up with the latest developments wherever I could. When OpenAI first published results from the GPT‑2 experiment in 2019, I knew something special was happening. A year later, I wasn’t just reading about it from the outside—I was on the inside, working with the incredible team that made that possible.

It felt like a new era: complex machine learning mathematics colliding with language in a way that was suddenly practical and surprisingly powerful. And for me personally, it felt like I’d landed in the center of a Venn diagram. I was a self‑taught programmer and a novelist. I was fascinated by how the human brain works—concepts like theory of mind, critical thinking, and what it means to understand. Watching these models take shape didn’t just feel like a technical breakthrough; it felt like the beginning of a new kind of tool.

I was first given access to GPT‑3 as a developer. I started exploring—then showing the team different capabilities, different approaches, different solutions. Before long, they were asking me if I could solve certain problems, or what I thought was possible. I’d experiment, build, iterate, and then write up what I found. Some of that work ultimately became part of the documentation for GPT‑3.

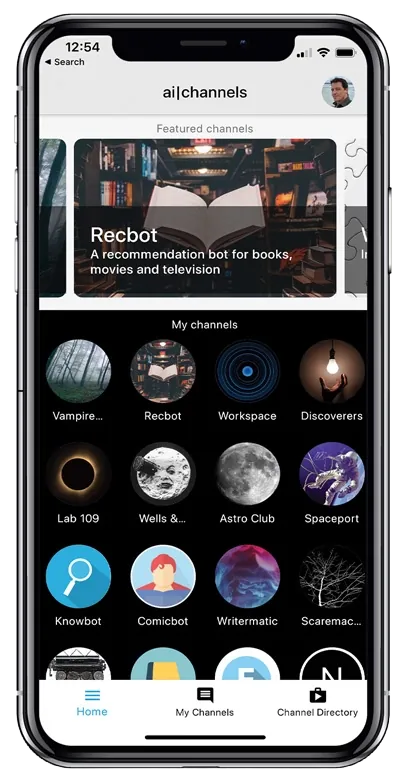

Originally, I’d planned to build an app, AI Channels, on top of GPT‑3. OpenAI even featured it in June 2020 when it launched the GPT‑3 API. But given the cost and latency of the models back then, the idea felt a little ahead of its time. When the opportunity came to work with OpenAI directly, I eagerly jumped aboard.

What followed was the experience of a lifetime, one I still pinch myself over. I’ve been lucky to have a few of those: having my own TV show, swimming with great white sharks for the Discovery Channel, and enjoying a rewarding career as a novelist. But being a tiny part of a bigger team, pushing at the edge of what was possible, was more rewarding than I could have imagined. I loved every day I was handed a challenge or asked, “Do you think this is possible?” I worked hard to push the envelope, version after version, as the technology evolved.

After four years, I decided I wanted to spend more time playing with the technology—experimenting, exploring, and helping other founders make use of it—so I moved on. But I stayed actively engaged. And then, last year, in 2025, OpenAI asked if I wanted to host their official podcast. It’s been a wonderful experience—an excuse to talk to many of the colleagues I worked with, and to meet many of the new people who’ve come aboard to expand what’s possible.

This guide is my attempt to gather my notes and explorations into one place—to help people who are curious about the early history of prompting, who want techniques, and who want some of the origin stories behind certain ideas. I’ll keep updating it as I continue to explore. This is still an ongoing field, and the frontier feels bigger than ever.

A quick note up front: some of the techniques you’ll see here are a bit outdated. They were developed for models that nobody uses anymore. Others still have real utility even as models have scaled. You’ll also find early explorations I never pursued: things I found interesting, moved on from, and later realized were surprisingly useful. That’s the trade-off you make as an explorer. Sometimes you find something and sprint toward the next discovery. Sometimes you follow one thread as far as it can possibly go.

One more thing I want to add, because it’s hard to convey the feeling in hindsight: at the time, we knew we were watching something really special, but the shape of how it would develop was hard to fathom. A couple of patterns did emerge that, had we fully grasped them, might have changed the way we thought about everything.

We could see the models were going to become more capable. What I didn’t fully anticipate was how much cheaper they would become. I assumed prices would come down, sure, but the cost of intelligence today compared with six years ago is insane. It’s incredible. It’s remarkable. And it’s one of the greatest achievements we’ve had so far.

And I think it’s only just beginning.

I’m also amazed by how often I use these tools in everyday life, and how often I use them to make tools. Right now I’m using an app I coded with OpenAI’s Codex to create this guide. I needed a way to gather my notes and thoughts and then format them into posts. It’s hard for me to imagine taking on this project, on top of everything else I have going on, without these systems. From the beginning, I knew they were amplifiers.

I feel that even more today.

Andrew Mayne

Start with all posts to read linearly, or use the Chronology and sidebar navigation for alternate pathways.